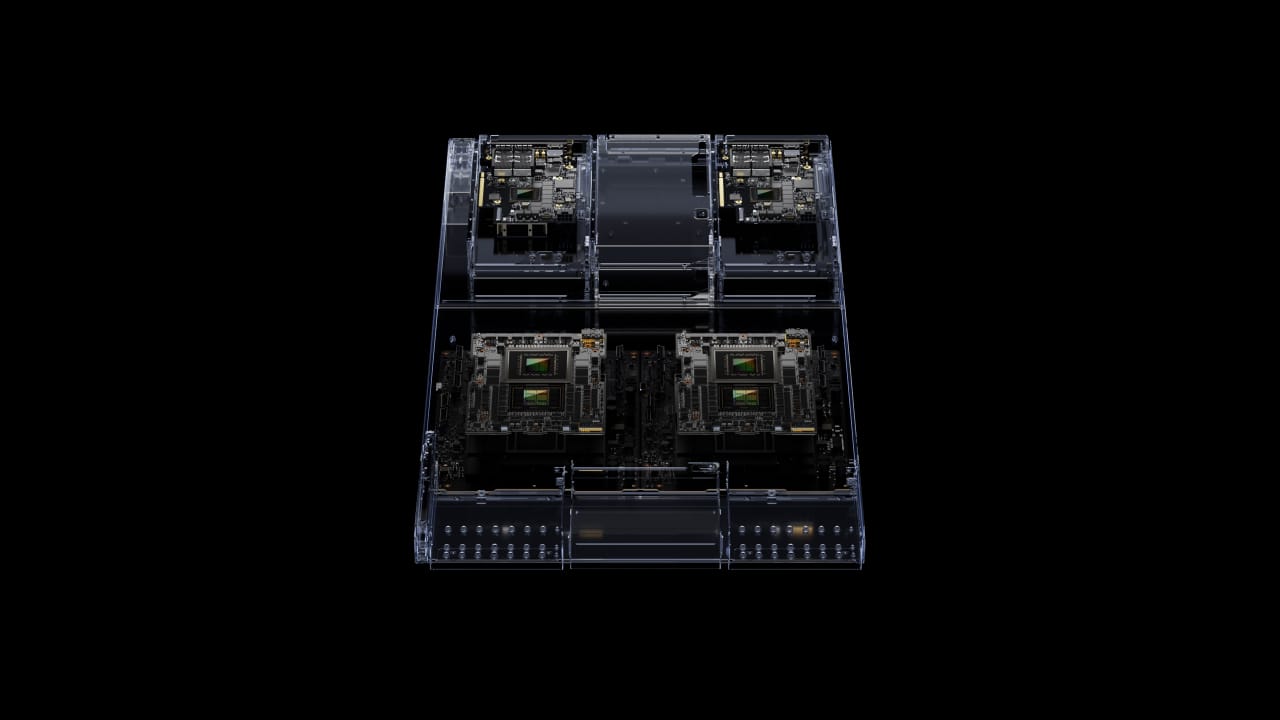

NVIDIA has introduced the next-generation GH200 Grace Hopper™ platform for accelerated computing and generative AI. The platform is built on a new Grace Hopper Superchip, which NVIDIA describes as the world’s first HBM3e processor.

The GH200 platform is built for the most demanding generative AI workloads, including large language models, recommender systems and vector databases. It will be available in a range of configurations to suit different deployments.

The headline option is the dual configuration, which offers considerably more memory capacity and bandwidth than the previous generation. A single server in this configuration provides 144 Arm Neoverse cores, eight petaflops of AI performance and 282GB of HBM3e memory.

Jensen Huang, Founder and CEO of NVIDIA, stressed the need to meet surging demand for generative AI in data centres. “The new GH200 Grace Hopper Superchip platform delivers this with exceptional memory technology and bandwidth to improve throughput, the ability to connect GPUs to aggregate performance without compromise, and a server design that can be easily deployed across the entire data centre,” he said.

At the heart of the platform is the Grace Hopper Superchip. Superchips can be connected to one another over NVIDIA NVLink™, allowing them to work together to deploy the very large models used in generative AI. This high-speed link gives the GPU full access to CPU memory, providing a combined 1.2TB of fast memory in the dual configuration.

Equally significant is the move to HBM3e memory. HBM3e is 50% faster than current HBM3 and gives the GH200 platform a combined bandwidth of 10TB/sec. According to NVIDIA, this allows the new platform to run models 3.5 times larger than the previous version, with three times the memory bandwidth.
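To see why memory bandwidth matters for generative AI, consider a common first-order estimate: when a model generates text one token at a time, it has to read all of its weights from memory for each token, so bandwidth divided by the size of the weights gives a rough ceiling on tokens per second for a single sequence. The sketch below applies that estimate to the 10TB/sec figure quoted above. The 140GB model size (roughly 70 billion parameters at two bytes each) and the function name are illustrative assumptions rather than NVIDIA figures, and the "previous generation" bandwidth is simply what the threefold claim implies, not a measured value.

```python
def decode_ceiling_tokens_per_s(bandwidth_tb_s: float, weights_gb: float) -> float:
    """Rough ceiling on single-sequence tokens/s when decoding is memory-bound.

    Assumes every generated token requires one full read of the weights.
    """
    bandwidth_gb_s = bandwidth_tb_s * 1000  # 1 TB = 1000 GB
    return bandwidth_gb_s / weights_gb


# Figure quoted in the article for the dual configuration.
GH200_COMBINED_TB_S = 10.0

# Implied by the article's "three times the memory bandwidth" claim (assumption).
PREVIOUS_GEN_TB_S = GH200_COMBINED_TB_S / 3

# Hypothetical model: ~70 billion parameters at 2 bytes each (assumption).
WEIGHTS_GB = 140.0

new_ceiling = decode_ceiling_tokens_per_s(GH200_COMBINED_TB_S, WEIGHTS_GB)
old_ceiling = decode_ceiling_tokens_per_s(PREVIOUS_GEN_TB_S, WEIGHTS_GB)

print(f"GH200 (dual) ceiling:      {new_ceiling:.1f} tokens/s")
print(f"Implied previous ceiling:  {old_ceiling:.1f} tokens/s")
print(f"Ratio:                     {new_ceiling / old_ceiling:.1f}x")
```

The ceiling scales linearly with bandwidth, which is why a bandwidth increase feeds directly into inference speed under this simple model. Real throughput also depends on batching, caching and software, so these numbers are upper bounds for illustration only.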

The announcement comes as an ecosystem is already forming around the Grace Hopper platform. Several notable manufacturers have adopted the Grace Hopper Superchip, which should make the technology more widely available.

The next-generation Grace Hopper Superchip platform with HBM3e is compatible with the NVIDIA MGX™ server specification, so it can be adopted across more than 100 server configurations.

The GH200 arrives as competition in AI hardware intensifies. NVIDIA holds a market share of over 80% in AI hardware, a position built on its GPU-centric approach. Its GPUs, such as the H100 and A100, have become the preferred choice for large AI models.

The GH200 also reflects how AI models are used in practice. AI work involves two phases, training and inference, and the GH200 is aimed particularly at inference. Its larger memory capacity accommodates bigger AI models, which makes serving them simpler and more efficient.
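As a rough illustration of why memory capacity matters for inference, the sketch below checks whether a model's weights would fit in the memory figures quoted in this article: 282GB of HBM3e and 1.2TB of combined fast memory in the dual configuration. The model sizes, the two-bytes-per-parameter precision and the function names are assumptions made for the example, and the check ignores everything other than weights (activations, caches and software overhead), so treat it as a back-of-the-envelope estimate.

```python
HBM3E_GB = 282          # HBM3e capacity, dual configuration (from the article)
FAST_MEMORY_GB = 1200   # combined fast memory, dual configuration (1.2TB, from the article)


def weights_size_gb(params_billion: float, bytes_per_param: float) -> float:
    """Size of the weights in GB: billions of parameters times bytes per parameter."""
    return params_billion * bytes_per_param


def placement(params_billion: float, bytes_per_param: float = 2.0) -> str:
    """Classify where the weights alone would fit (weights only; an assumption)."""
    size = weights_size_gb(params_billion, bytes_per_param)
    if size <= HBM3E_GB:
        return "fits in HBM3e"
    if size <= FAST_MEMORY_GB:
        return "fits in combined fast memory"
    return "exceeds combined fast memory"


# Hypothetical model sizes in billions of parameters, at 2 bytes per parameter.
for params in (70, 175, 1000):
    size = weights_size_gb(params, 2.0)
    print(f"{params}B parameters, {size:.0f}GB of weights: {placement(params)}")
```

A model that fits entirely in the fastest memory tier avoids being split across several systems, which is the kind of simplification the larger capacity is meant to provide.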

This focus positions the GH200 to lower the cost of inference, bringing larger language models within reach of a broader range of applications. For NVIDIA, it is another step in extending its lead in AI computing.

The GH200 is expected to arrive in the second quarter of next year, promising more efficient AI inference and substantially greater memory capacity.